Artificial Intelligence and Digitalization

For millennia, materials design relied on chance discoveries and empirical rules, which were later refined into predictive theories and simulations. Today, this classical approach is being transformed: automation, advanced characterization, and simulations generate vast amounts of data, which we turn into insight. By combining materials science expertise with advanced data analysis and artificial intelligence, we move beyond collecting knowledge and use it to accelerate progress.

MPIE research addresses the following challenges and opportunities in particular:

1. Experiments such as atom probe tomography or scanning transmission electron microscopy produce very large data sets (from several GB to several TB per experiment) that encode the inherent microstructural patterns of the investigated sample. However, such data scatter because of the omnipresent statistical fluctuations in real materials and the unavoidable noise in the experiment. Similar challenges arise in computer simulations that employ stochastic sampling to cover high-dimensional parameter spaces. Big data analysis helps to reveal hidden patterns that emerge only when sufficient data have been accumulated.

2. Our experimentalists know how to identify and interpret microstructural and other features, but they usually spend far more time (100-1000x) evaluating the data than producing them. We develop tools for semi-automatic analysis that free scientists to focus on the exceptional aspects and reduce human error in routine tasks.

3. Data should be kept only if they are relevant and accessible. We therefore work on algorithms that filter for important data and reduce raw data storage through preprocessing. Proper metadata that track the history of the sample, the measurement details, and the data processing steps are crucial to ensure data quality and to enable reuse of available data in new contexts. Our goal is for the tools we develop to attach and augment metadata automatically, and to make these metadata accessible to other automatic (meta)tools by linking them to materials science ontologies. To provide an infrastructure for creating, managing, and analysing data in a highly automated fashion from day 1 (i.e. for scientists without prior data-science experience), we developed the pyiron framework.

4. Artificial intelligence methods can be trained to make predictions from data without building conventional scientific models. We see great opportunities in this, though not as a replacement for traditional science. Rather, we explore how artificial intelligence may guide theoretical and experimental setups towards the most interesting conditions for exciting discoveries. After all, 90-99% of all research efforts turn out to be unsuccessful, scientific dead ends, or simply boring. Reducing that fraction by even a small amount will boost progress!
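The automatic metadata tracking described in item 3 can be illustrated with a minimal Python sketch. It is not the pyiron API: the `Dataset` class, the `tracked` decorator, the two processing steps, and the sample name are all hypothetical and serve only to show how a tool could record every processing step alongside the data it produces.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class Dataset:
    """Hypothetical container pairing values with their provenance metadata."""
    values: list[float]
    metadata: dict[str, Any] = field(default_factory=dict)
    history: list[dict[str, Any]] = field(default_factory=list)


def tracked(step: Callable[..., list[float]]) -> Callable[..., Dataset]:
    """Wrap a processing step so that it records itself in the dataset history."""
    def wrapper(data: Dataset, **params: Any) -> Dataset:
        new_values = step(data.values, **params)
        entry = {
            "step": step.__name__,
            "parameters": params,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }
        # Return a new dataset so that earlier processing stages stay unchanged.
        return Dataset(new_values, dict(data.metadata), data.history + [entry])
    return wrapper


@tracked
def subtract_background(values: list[float], level: float) -> list[float]:
    """Illustrative step: remove a constant background level."""
    return [v - level for v in values]


@tracked
def clip_negative(values: list[float]) -> list[float]:
    """Illustrative step: set negative values to zero."""
    return [max(v, 0.0) for v in values]


if __name__ == "__main__":
    raw = Dataset(
        [1.2, 0.4, 3.1],
        metadata={"sample": "hypothetical-sample-01", "instrument": "APT"},
    )
    processed = clip_negative(subtract_background(raw, level=0.5))
    for entry in processed.history:
        print(entry["step"], entry["parameters"], entry["timestamp"])
```

Because each step returns a new `Dataset` with an extended history, the scientist never has to write the provenance by hand, and the raw data keep their original, empty history.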

Max Planck team receives 1.5 million euros as part of the European project FULL-MAP

The general success of large language models (LLMs) raises the question of whether they could be applied to accelerate materials science research and to discover novel sustainable materials. Interdisciplinary research fields such as materials science benefit in particular from the capability of LLMs to construct a tokenized vector representation of a large body of literature, larger than any human could read in a lifetime.

Version 3.0 of the material simulation software suite DAMASK released

Scientists at the Max-Planck-Institut für Eisenforschung pioneer a new machine learning model for corrosion-resistant alloy design. Their results are now published in the journal Science Advances.

Dr. Ziyuan Rao leads the new research group “Artificial Intelligence for Materials Science” at MPIE

International research team publishes universal framework

Video explaining the digitalization in materials science

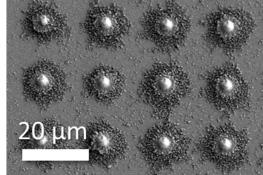

Achieving statistical significance is a challenge in materials science that researchers have tried to overcome through miniaturization. However, this approach is still limited to 4-5 tests per parameter variation (e.g. size, orientation, grain size, or composition), because pillars have to be fabricated and tested one by one. In this project, we aim to fabricate arrays of well-defined, precisely located particles that can be tested in an automated manner. With a statistically significant number of samples tested per parameter variation, we expect to be able to apply more complex statistical models and machine learning techniques to analyze this complex problem.
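A minimal sketch of why 4-5 tests per parameter setting are limiting: it compares the 95% confidence interval of a mean strength estimated from 5 tests with that from 100 tests. The strength values are simulated and purely hypothetical (Gaussian scatter, arbitrary units), and the critical values are standard Student's t table entries; nothing here reflects the project's actual data or analysis.

```python
import math
import random
import statistics

# Two-sided 95% critical values of Student's t distribution (standard table values),
# keyed by degrees of freedom (n - 1).
T_CRIT_95 = {4: 2.776, 99: 1.984}


def confidence_half_width(samples):
    """Half-width of the 95% confidence interval of the sample mean."""
    n = len(samples)
    t_crit = T_CRIT_95[n - 1]  # only n = 5 and n = 100 are tabulated in this sketch
    return t_crit * statistics.stdev(samples) / math.sqrt(n)


def simulate_tests(n_tests, mean_strength=1.0, scatter=0.2, seed=42):
    """Draw hypothetical strength values (arbitrary units) for one parameter setting."""
    rng = random.Random(seed)
    return [rng.gauss(mean_strength, scatter) for _ in range(n_tests)]


if __name__ == "__main__":
    for n in (5, 100):
        samples = simulate_tests(n)
        mean = statistics.fmean(samples)
        print(f"n={n:>3}: mean={mean:.3f} +/- {confidence_half_width(samples):.3f}")
```

The interval narrows both because the standard error scales with 1/sqrt(n) and because the t critical value drops as the number of tests grows, which is what makes more complex statistical models feasible with automated testing.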

Atom probe tomography (APT) provides three-dimensional (3D) chemical mapping of materials at sub-nanometer spatial resolution. In this project, we develop machine-learning tools to facilitate the microstructure analysis of APT data sets in a well-controlled way.

Atom probe tomography (APT) is one of MPIE’s key experiments for understanding the interplay of chemical composition in very complex microstructures down to the level of individual atoms. In APT, a needle-shaped specimen (tip diameter ≈100 nm) is prepared from the material of interest and subjected to a high voltage. Additional voltage or laser pulses trigger the evaporation of single ions from the tip.

Ever since the discovery of electricity, chemical reactions occurring at the interface between a solid electrode and an aqueous solution have aroused great scientific interest, not least because of the opportunity to influence and control the reactions by applying a voltage across the interface. Our current textbook knowledge is mostly based on mesoscopic concepts, i.e. effective models with empirical parameters, or focuses on individual reactions decoupled from the environment. This presents a serious obstacle to predicting what happens at a particular interface under particular conditions.

Recent developments in experimental techniques and computer simulations have provided the basis for many of the breakthroughs in understanding materials down to the atomic scale. While extremely powerful, these techniques produce increasingly complex data, forcing all departments to develop advanced data management and analysis tools and to invest in software engineering expertise.

Integrated Computational Materials Engineering (ICME) has been one of the emerging hot topics in computational materials simulation in recent years. It aims to integrate simulation tools at different length scales and along the processing chain in order to predict and optimize final component properties.

Data-rich experiments such as scanning transmission electron microscopy (STEM) provide large amounts of multi-dimensional raw data that encode, via correlations or hierarchical patterns, much of the underlying materials physics. With modern instrumentation, data generation tends to be faster than human analysis, and the full information content is rarely extracted. We therefore work on automating these processes and on applying data-centric methods to unravel hidden patterns. At the same time, we aim to use the insights from information extraction to direct data acquisition towards the most relevant aspects, and thereby avoid collecting huge amounts of redundant data.

The project’s goal is to combine phase transformation dynamics observed experimentally via scanning transmission electron microscopy with phase-field models, which will enable us to learn the continuum description of complex material systems directly from experiment.

Max Planck researchers present a new deep neural network for predicting materials’ mechanical behaviour

German Federal Ministry of Education and Research supports digitization of materials research with 26 million euros

To prepare raw data from scanning transmission electron microscopy for analysis, pattern detection algorithms are developed that automatically identify higher-order features, such as crystalline grains and lattice defects, from atomically resolved measurements.

New product development in the steel industry nowadays requires faster development of new alloys of increasing complexity. Moreover, for these complex new steel grades, it is more challenging to control the properties along the process chain. This leads to more experimental testing, more plant trials, and higher rejection rates because requirements are not met. Steel companies therefore wish to have a sophisticated offline through-process model that captures the evolution of microstructure and engineering properties during manufacturing.

Crystal Plasticity (CP) modeling [1] is a powerful and well-established computational materials science tool for investigating mechanical structure–property relations in crystalline materials. It has been successfully applied to study diverse micromechanical phenomena ranging from strain hardening in single crystals to texture evolution in polycrystalline aggregates.
